August 25, 2000: Intel Developers Forum

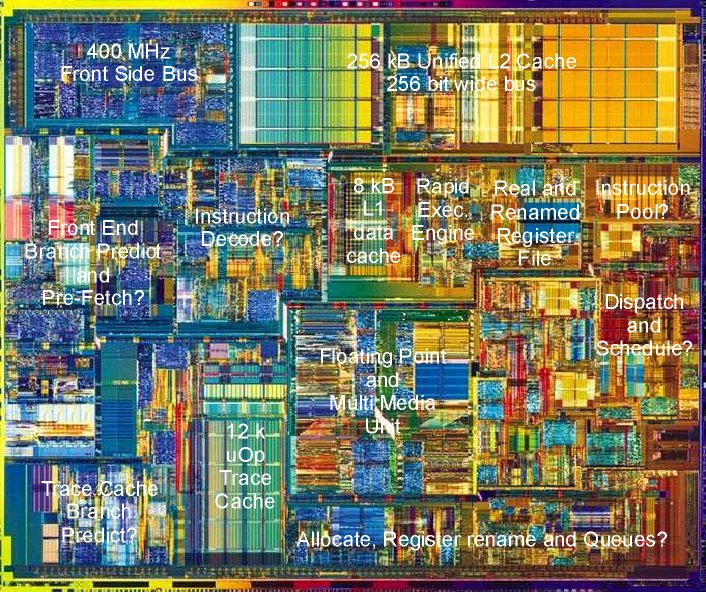

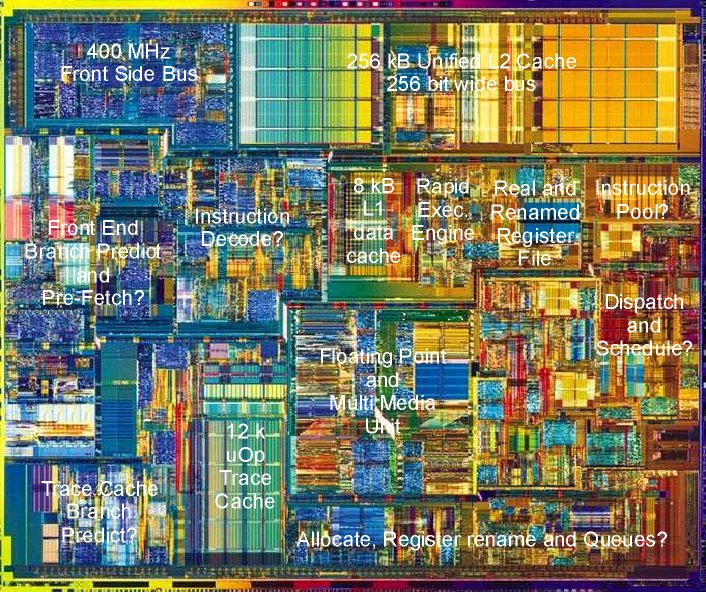

| A few more Pentium 4 facts are becoming available from the IDF:

Willamette's die size has grown from 170 mm² to 217 mm². We estimate that it will shrink to circa 115 mm² in the second half of next year if Intel migrates to its P860 130 nm copper process. The complete transition to 130 nm would be finished by the end of 2003.

"The Oregon team has eliminated circa 33% of the branch mispredictions of the P6." This is in line with expectations. A 50% reduction would have been necessary to counterbalance the increased branch misprediction penalty caused by the 20-stage pipeline behind the Trace Cache.

"The Pentium 4 L1 Data Cache latency has been reduced 2x from 3 cycles at 1 GHz for the P6 to 2 cycles at 1.45 GHz for the Pentium 4." In our opinion, the total L1 cache latency is still 3 cycles for the Willamette: one cycle for the Effective Address Calculation and two cycles to access the actual cache.
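The arithmetic behind the die-size, branch and L1 figures above can be checked with a short sketch. It rests on assumptions that are not stated in the text: that the current Willamette is built on a 180 nm process, that a shrink scales area ideally with the square of the feature size, and that the misprediction penalty roughly doubles (which is what the 50% break-even figure implies). The function names are ours, for illustration only.

```python
# Back-of-the-envelope checks for the figures quoted above.
# All constants marked "assumption" are ours, not Intel's.

DIE_AREA_MM2 = 217.0    # Willamette die size reported at the IDF
OLD_NODE_NM = 180.0     # assumption: process used for the current Willamette
NEW_NODE_NM = 130.0     # the 130 nm copper process
PENALTY_RATIO = 2.0     # assumption: misprediction penalty doubles vs. the P6


def ideal_shrink_area(area_mm2, old_nm, new_nm):
    """Die area after an ideal shrink: area scales with feature size squared."""
    return area_mm2 * (new_nm / old_nm) ** 2


def breakeven_reduction(penalty_ratio):
    """Fraction of mispredictions to remove so total lost cycles stay equal."""
    return 1.0 - 1.0 / penalty_ratio


def relative_branch_loss(fraction_removed, penalty_ratio):
    """Cycles lost to mispredictions relative to the P6 (1.0 = same)."""
    return (1.0 - fraction_removed) * penalty_ratio


def cycles_to_ns(cycles, clock_ghz):
    """Convert a latency in clock cycles to nanoseconds."""
    return cycles / clock_ghz


shrunk = ideal_shrink_area(DIE_AREA_MM2, OLD_NODE_NM, NEW_NODE_NM)
print(f"Ideal 130 nm die size: {shrunk:.0f} mm^2")

print(f"Break-even misprediction reduction: {breakeven_reduction(PENALTY_RATIO):.0%}")
print(f"Relative branch loss at 33% removed: {relative_branch_loss(0.33, PENALTY_RATIO):.2f}x")

p6_ns = cycles_to_ns(3, 1.0)          # P6: 3 cycles at 1 GHz
p4_quoted_ns = cycles_to_ns(2, 1.45)  # Intel's figure: 2 cycles at 1.45 GHz
p4_total_ns = cycles_to_ns(3, 1.45)   # our estimate: 3 cycles including address calculation
print(f"L1 speedup, Intel's figure: {p6_ns / p4_quoted_ns:.2f}x")
print(f"L1 speedup, counting 3 cycles: {p6_ns / p4_total_ns:.2f}x")
```

Under these assumptions the ideal shrink lands close to the circa 115 mm² estimate, and counting the address calculation cycle reduces the L1 gain from roughly 2x to the plain clock-speed ratio.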

"The average load latency has been reduced by a factor 1.8." The average load latency stems from the mix of L1, L2 and memory latencies. The L1 latency has been discussed above. Intel has not given the L2 latency. Early Cachemem results indicate a 6-cycle latency, similar to the P6. In absolute time, the L2 latency would therefore be 1.4 to 1.5 times lower for Willamette, thanks to the increase in clock speed.

"The Pentium 4 has two 'double ALUs' (four in total)." This confirms our idea that the ALUs work in pairs at the normal clock frequency in a "double-pumped" fashion to give an effective throughput of 3 GHz at 1.5 GHz. See AMD's Mustang versus Intel's Willamette, page 10.

And now the big question: how does the P4 compare to the Pentium III Coppermine or Athlon at equal clock frequencies? Do we have the courage to make a prediction? Well, O.K. ... Here's our best guess: 5-10% faster for "branch-less" programs like the video encoding benchmark which handles fixed

|
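The clock-normalised L2 latency and the double-pumped ALU rate discussed above can be reproduced with a few lines. This is a minimal sketch: the 6-cycle L2 latency is the early Cachemem reading rather than an Intel figure, the 1.4 to 1.5 GHz clock range is our assumption taken from the "1.4 to 1.5 times" estimate, and the function names are hypothetical.

```python
# Rough check of the L2 latency and ALU throughput figures.

L2_CYCLES = 6          # early Cachemem result, same cycle count as the P6
P6_CLOCK_GHZ = 1.0     # P6 reference clock used in Intel's comparison


def latency_ns(cycles, clock_ghz):
    """Latency in nanoseconds for a given cycle count and clock."""
    return cycles / clock_ghz


def latency_gain(old_clock_ghz, new_clock_ghz):
    """With an equal cycle count, the latency gain equals the clock ratio."""
    return new_clock_ghz / old_clock_ghz


def double_pumped_rate_ghz(core_clock_ghz, ops_per_cycle=2):
    """Effective ALU rate when each ALU performs two operations per core clock."""
    return core_clock_ghz * ops_per_cycle


for clock in (1.4, 1.5):  # assumption: Willamette clock range
    print(
        f"{clock} GHz: L2 = {latency_ns(L2_CYCLES, clock):.2f} ns, "
        f"{latency_gain(P6_CLOCK_GHZ, clock):.1f}x lower than the P6's "
        f"{latency_ns(L2_CYCLES, P6_CLOCK_GHZ):.0f} ns"
    )

print(f"Effective ALU rate at 1.5 GHz: {double_pumped_rate_ghz(1.5):.1f} GHz")
```

Because the cycle count is unchanged, all of the L2 improvement in this sketch comes from the higher clock, which is why the gain falls below the factor 1.8 quoted for the average load latency.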